Vibe Coding Weekly #25

Vibe Coding Weekly is your definitive source for staying current with the latest trends, tools, and techniques that are transforming the development landscape.

Happy Monday!

Welcome to edition #25 of Vibe Coding Weekly.

This week in one satisfying refactor:

The Acquisition: OpenAI bought Astral — makers of uv, Ruff, and ty — to supercharge Codex. When you buy the tools that Python developers already can’t live without, you’re not building a moat. You’re buying one.

The Model Wars: Three model families dropped in one week (GPT-5.4 mini/nano, Mistral Small 4, and Xiaomi’s MiMo-V2-Pro), and the real story is the subagent era: models designed not to talk to humans, but to other models.

The Gatekeeping: Apple blocked App Store updates for Replit and Vibecode, citing rules against apps that change functionality post-review. The vibe coding dream just hit its first platform wall.

The Correction: Microsoft started pulling Copilot out of Windows apps after users called it bloat. Even Microsoft admits: AI should only go where it’s “genuinely useful.”

The Compensation Shift: Jensen Huang proposed giving engineers token budgets worth half their salary. The NYT reported engineers competing on internal “tokenmaxxing” leaderboards. Tokens are becoming the fourth pillar of compensation, or the most creative way to double your output expectations.

Free for subscribers!

Change Management in Agentic AI Adoption — A practical framework for making AI adoption actually work inside your team.

Plus every week: curated tools, articles, and insights on AI-powered software development.

Key Takeaways

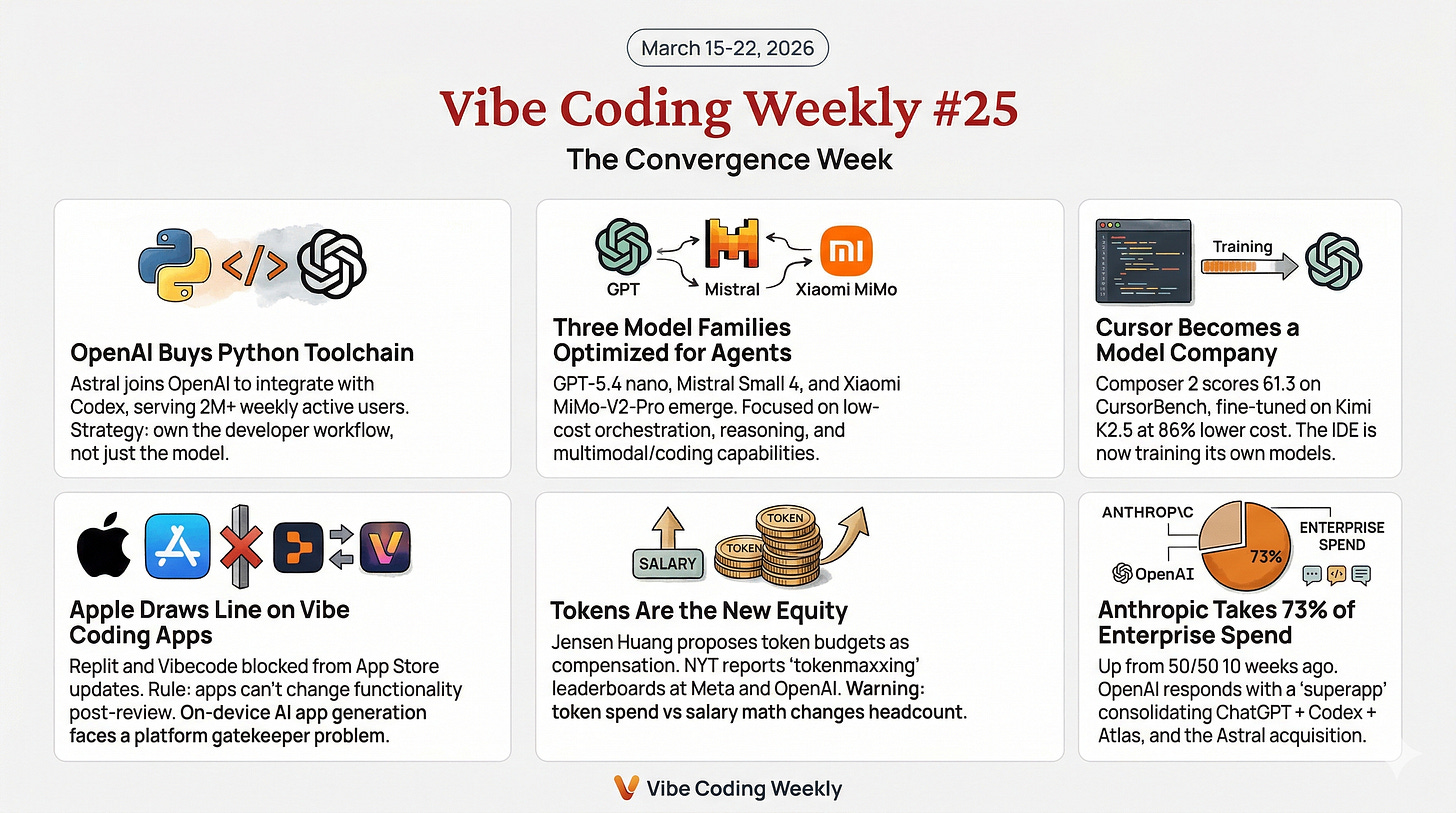

OpenAI buys the Python toolchain: Astral (uv, Ruff, ty) joins OpenAI to integrate with Codex, which has 2M+ weekly active users. The strategy: own the developer workflow, not just the model.

Three model families in one week, all optimized for agents: GPT-5.4 nano at $0.20/M input tokens for subagent orchestration, Mistral Small 4 unifying reasoning + multimodal + coding under Apache 2.0, and Xiaomi’s MiMo-V2-Pro emerging from stealth with 1T params at roughly 1/6th of frontier cost.

Cursor becomes a model company: Composer 2, fine-tuned from Kimi K2.5, scores 61.3 on CursorBench (vs. Opus 4.6 at 58.2) and is priced 86% below its predecessor. The IDE is now training its own models.

Apple draws the line on vibe coding apps: Replit and Vibecode blocked from App Store updates. The rule: apps can’t change functionality post-review. The implication: on-device AI app generation faces a platform gatekeeper problem.

Tokens are the new equity: Jensen Huang proposes token budgets as compensation. NYT reports “tokenmaxxing” leaderboards at Meta and OpenAI. TechCrunch warns: when your token spend approaches your salary, the math on headcount changes.

Anthropic takes 73% of first-time enterprise spend: Up from 50/50 with OpenAI just ten weeks ago. OpenAI responds with a “superapp” consolidating ChatGPT + Codex + Atlas browser, and the Astral acquisition.

📦 Releases & News

OpenAI Acquires Astral to Supercharge Codex for Python Developers

OpenAI announced it will acquire Astral, the company behind uv (package manager), Ruff (linter), and ty (type checker) — three open-source tools that have become foundational to modern Python development. The plan: integrate Astral’s tooling and engineering expertise directly into Codex, which now has over 2 million weekly active users and 5x usage growth since the start of the year. OpenAI commits to maintaining Astral’s open-source products post-acquisition. The strategic context is telling — with Anthropic capturing 73% of first-time enterprise AI spending (per Ramp/Axios data, up from 50/50 just ten weeks ago), OpenAI is shifting from model competition to developer workflow ownership.

OpenAI Releases GPT-5.4 Mini and Nano

OpenAI’s smallest GPT-5.4 variants are designed for the subagent era — lightweight models that handle classification, routing, and tool selection as part of multi-agent orchestration. GPT-5.4 mini ($0.75/$4.50 per million tokens) outperforms GPT-5 mini on coding, reasoning, and multimodal tasks while running 2x faster. GPT-5.4 nano ($0.20/$1.25) is cheaper than Gemini 3.1 Flash-Lite and, as Simon Willison demonstrated, can describe every photo in a 76,000-image collection for $52.44. Mini is now available to free ChatGPT users; nano is API-only. The pricing signals where OpenAI sees the volume: not in human conversations, but in the millions of model-to-model calls that power agentic workflows.
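To make the pricing concrete, here is a minimal sketch of the arithmetic using only the prices and the Simon Willison figure quoted above. The per-call token counts (2,000 in, 200 out) are a hypothetical workload chosen for illustration, not a measured one, and `call_cost` is an illustrative helper, not part of any SDK.

```python
# Prices quoted above, in USD per million tokens: (input, output).
PRICES = {
    "gpt-5.4-mini": (0.75, 4.50),
    "gpt-5.4-nano": (0.20, 1.25),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one call at the quoted per-million-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Simon Willison's reported total: $52.44 for a 76,000-image collection.
cost_per_image = 52.44 / 76_000

# Hypothetical workload (an assumption): 2,000 input and 200 output tokens per call.
nano_call = call_cost("gpt-5.4-nano", 2_000, 200)
mini_call = call_cost("gpt-5.4-mini", 2_000, 200)

print(f"cost per image:  ${cost_per_image:.5f}")
print(f"nano, one call:  ${nano_call:.5f}")
print(f"mini, one call:  ${mini_call:.5f}")
```

At these prices the image job works out to well under a tenth of a cent per photo, which is the scale at which per-call cost stops being the deciding factor for routing and classification work.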

Mistral Releases Small 4: One Model to Unify Them All

Mistral Small 4 is a 119B-parameter Mixture-of-Experts model with 128 experts and just 6B active parameters per token, released under Apache 2.0. It’s the first Mistral model to unify Magistral (reasoning), Pixtral (multimodal), and Devstral (agentic coding) into a single engine. The standout feature is configurable reasoning effort: set it to “none” for fast chat-style responses or “high” for deliberate step-by-step reasoning. Performance: 40% lower latency and 3x more requests per second versus Small 3. Available via Mistral API, Hugging Face, and vLLM. Also shipping alongside it: Leanstral, a specialized model for Lean 4, the proof assistant and programming language used for formal verification.
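The gap between total and active parameters is the reason a Mixture-of-Experts model of this size can be cheap to run. A rough sketch of that ratio, using only the two figures quoted above (the function name is illustrative):

```python
def active_fraction(total_params_b: float, active_params_b: float) -> float:
    """Share of a Mixture-of-Experts model's parameters used per token.

    Both arguments are in billions of parameters.
    """
    if total_params_b <= 0:
        raise ValueError("total_params_b must be positive")
    return active_params_b / total_params_b


# Figures quoted above: 119B total parameters, 6B active per token.
small4 = active_fraction(119, 6)
print(f"Mistral Small 4: {small4:.1%} of parameters active per token")
```

Per-token compute scales roughly with the active count, while serving the model still generally requires holding all of the weights in memory, so the ratio says more about speed and throughput than about hardware footprint.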

Xiaomi Reveals MiMo-V2-Pro — The “Hunter Alpha” Mystery Model

Xiaomi officially unveiled MiMo-V2-Pro, a 1-trillion parameter reasoning-oriented model with 42B active at inference and a 1M context window. The backstory is the real headline: the model first appeared anonymously as “Hunter Alpha” on OpenRouter in early March, quickly topping usage charts and processing over 1 trillion tokens during its stealth phase before anyone knew it was Xiaomi. Performance approaches GPT-5.2 and Opus 4.6 at approximately 1/6th the cost. The MiMo division is led by Luo Fuli, a former core contributor to DeepSeek’s breakthrough models. Alongside MiMo-V2-Pro, Xiaomi released MiMo-V2-Omni (multimodal) and MiMo-V2-TTS (text-to-speech). China’s hardware companies are now frontier model companies.

Cursor Ships Composer 2 — Its Own Coding Model

Cursor released Composer 2, an in-house coding model fine-tuned from Kimi K2.5 (from Moonshot AI). On CursorBench, it scores 61.3, a large jump from Composer 1.5 (44.2) that puts it ahead of Claude Opus 4.6 (58.2) and just behind GPT-5.4 Thinking (63.9). Pricing represents an 86% cost reduction from its predecessor: Standard at $0.50/$2.50 and Fast at $1.50/$7.50 per million tokens, with a 200K-token context window. The move transforms Cursor from an IDE that wraps other companies’ models into a company that trains its own. It also validates a broader trend: fine-tuning open models (Kimi K2.5 is an open-source model from a Chinese lab) for specific domains can match or beat frontier models at dramatically lower cost.

Apple Blocks Updates for “Vibe Coding” Apps

Apple has quietly prevented Replit and Vibecode from releasing App Store updates, citing guidelines 2.5.2 (apps can’t execute code that changes their functionality) and 3.3.1(B) (interpreted code can’t change an app’s primary purpose). The core problem: vibe coding tools let users create entirely new applications through AI prompts, effectively making the app something different after it passes review. The proposed solution: apps open generated content in an external browser rather than an in-app preview. Apple says it has no rules specifically against vibe coding, but the existing guidelines land squarely on the category. The irony: Apple itself has embraced vibe coding in Xcode, adding integration with OpenAI and Anthropic’s agentic tools.

Claude Code Ships v2.1.78–v2.1.81: Channels, Remote Control, and Plugin State

Four releases in four days continue Claude Code’s relentless shipping pace. The headline feature: --channels (research preview), allowing MCP servers to push messages to Claude Code sessions — a shift from pull-based tool calls to event-driven agent communication. Other highlights: --bare flag for scripted pipeline calls (skips hooks, LSP, plugin sync), /remote-control for bridging VS Code sessions to claude.ai/code, plugin persistent state via ${CLAUDE_PLUGIN_DATA}, and effort frontmatter for skills and slash commands. Multiple fixes landed for voice mode WebSocket failures, --resume dropping parallel tool results, and large session truncation (>5MB). The StopFailure hook event now fires on API errors, enabling automated recovery workflows.

Anthropic Captures 73% of First-Time Enterprise AI Spending

According to Ramp customer data analyzed by Axios, Anthropic now captures 73% of all spending among companies buying AI tools for the first time — up from a 50/50 split with OpenAI just ten weeks ago, and 60/40 in OpenAI’s favor as recently as early December. The shift is driven by Claude Code’s bottom-up developer adoption and enterprise-friendly pricing. OpenAI’s response is a two-pronged strategy: the Astral acquisition for developer toolchain ownership and a planned “superapp” merging ChatGPT, Codex, and the Atlas browser into a single desktop experience, led by president Greg Brockman.

📚 Tutorials & Resources

The New Stack: GPT-5.4 Nano and Mini Are Built for the Subagent Era

The New Stack’s analysis frames GPT-5.4 nano not as a cheaper chatbot but as infrastructure for multi-agent orchestration. In a typical agentic workflow, a frontier model (Opus 4.6, GPT-5.4) acts as the orchestrator while dozens of lightweight subagents handle classification, routing, data extraction, and tool selection. At $0.20 per million input tokens, nano makes it economically viable to run hundreds of subagent calls per session without the cost dominating the orchestrator’s budget. The article draws a direct line from OpenAI’s pricing strategy to the architecture it’s betting on: not one model to rule them all, but a hierarchy of specialized models at different price points working together.
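The cost argument can be sketched with a back-of-the-envelope budget. The nano prices below are the ones quoted in this issue; the orchestrator price, the call counts, and the token sizes are hypothetical placeholders chosen only to show the shape of the calculation, not figures from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """USD cost of a single call with the given token counts."""
        return (
            input_tokens * self.input_per_m + output_tokens * self.output_per_m
        ) / 1_000_000


NANO = ModelPrice(0.20, 1.25)            # quoted in this issue
ORCHESTRATOR = ModelPrice(5.00, 25.00)   # hypothetical frontier price, an assumption

# Hypothetical session: 300 small subagent calls, 10 large orchestrator turns.
subagent_total = 300 * NANO.cost(input_tokens=1_500, output_tokens=100)
orchestrator_total = 10 * ORCHESTRATOR.cost(input_tokens=20_000, output_tokens=2_000)
subagent_share = subagent_total / (subagent_total + orchestrator_total)

print(f"subagents:    ${subagent_total:.4f}")
print(f"orchestrator: ${orchestrator_total:.4f}")
print(f"subagent share of session cost: {subagent_share:.1%}")
```

Under these assumed numbers, hundreds of subagent calls cost a small fraction of what a handful of orchestrator turns do. Swap in real prices and token counts before drawing conclusions for an actual workload.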

💡 Others

Are AI Tokens the New Signing Bonus — or Just a Cost of Doing Business?

Two stories converged this week into a single narrative about AI compensation. Jensen Huang proposed at GTC that engineers should receive token budgets worth half their base salary: compute credits they can spend to deploy AI agents as productivity multipliers. The New York Times reported on “tokenmaxxing,” in which engineers at Meta and OpenAI compete on internal leaderboards that track token consumption as a status indicator. Tomasz Tunguz of Theory Ventures predicts tokens will become the fourth pillar of engineering compensation alongside salary, bonus, and equity. But TechCrunch’s Connie Loizos raises the uncomfortable point: if a company’s token spend per employee approaches that employee’s salary, the implicit expectation is to produce at twice the rate, and the financial logic of headcount starts to look very different to the finance team.

Microsoft Rolls Back Copilot “Bloat” in Windows

Microsoft is removing Copilot integration points from Photos, Widgets, Notepad, File Explorer, and the Snipping Tool after sustained user criticism that the AI features felt “forced, difficult to remove, and not always useful.” The Copilot icon has been removed from the taskbar by default, and planned deeper integrations in Settings, notifications, and File Explorer have been scrapped. A corporate VP stated that Copilot will no longer be “shoved everywhere”; AI will only appear where it’s “genuinely believed to bring benefit.” The reversal is a data point for every platform team: aggressive AI integration without clear user value creates backlash, not adoption. The question is whether this lesson transfers to AI coding tools, where the value proposition is clearer but the “everywhere at once” temptation is the same.

OpenAI Plans Desktop “Superapp” Merging ChatGPT, Codex, and Atlas

OpenAI is consolidating ChatGPT, Codex, and its Atlas browser into a single Mac desktop application. President Greg Brockman will oversee the transition, with Fidji Simo leading enterprise sales. The motivation is partially competitive: Anthropic now captures 73% of first-time enterprise AI spending, and OpenAI’s product fragmentation (standalone ChatGPT, standalone Codex, standalone browser) is seen as a liability. The company also introduced Skills — reusable, shareable workflows that bundle instructions, examples, and code for ChatGPT to apply automatically. No timeline was given, and the mobile ChatGPT app remains unchanged. The subtext: when your biggest competitor is winning with a single, focused product (Claude/Claude Code), consolidation becomes existential.

That’s a wrap for this week!

The meta-narrative is convergence — and not just of products:

OpenAI is merging apps, acquiring toolchains, and building models specifically for subagent hierarchies.

Cursor is training its own models.

Xiaomi is running frontier models in stealth on public infrastructure.

Mistral is unifying three model families into one.

The era of single-purpose tools is ending. The era of single-purpose models might be ending too. What’s replacing both is systems — orchestrated, layered, economically optimized — where the value isn’t in any one model but in the architecture that connects them.

Even compensation is converging: tokens, salary, equity, and output expectations are collapsing into a single equation that companies are still learning to balance.

Stay tuned for next week’s edition.

In each update, you’ll receive:

Deep dives into new technologies and emerging frameworks

Optimized code patterns that enhance both efficiency and readability

Curated tools and resources that will supercharge your workflow

Community insights that are defining the future of development

Our goal is to provide you with concise, relevant, and actionable information that you can immediately apply to your projects.

Clean code and positive vibes,

The Vibe Coding Team
